The Apple II computer is unique in that not only was it the first home computer ever released to the mass market, it was also the first computer released to support color graphics, all the way back in 1977. It produced color by exploiting a quirk of the NTSC color system, called artifact color, which TVs were attempting to suppress. The design of the Apple II was so solid that its color works rather well on almost anything that can accept a composite signal, even today. But the color method it used did not translate to PAL countries, and later improvements in color filtering could change the colors shown. In this article, let's take a deep dive into how artifact color works on the Apple II, how it was adapted for systems where artifact color could not exist, and how artifact colors can change depending on the filtering technology inside a display.

How Artifact Color Works

Artifact color is based on a quirk of the NTSC method of decoding color. A color NTSC signal is made up of three components: brightness (luma), color (chroma) and sync. Brightness and sync were already present when the NTSC defined the black and white television standard in 1941. The NTSC added color in 1953 in a way that remained backward compatible with the black and white standard.

In color NTSC, a 3.58MHz color subcarrier sine wave is transmitted alongside a luma signal whose bandwidth is limited only by the transmission path (generally 4.2MHz for over-the-air broadcasts). The luma signal is an analog waveform whose voltage level corresponds to the brightness of the image as captured. The color, or hue, of the chroma signal is determined by the phase of the 3.58MHz sine wave relative to a "color burst" reference phase, and the saturation of the color is determined by the amplitude of the sine wave.

NTSC defined a system of 525 lines displayed at 60Hz, with the odd lines of an image displayed in the first field of 262.5 lines and the even lines displayed in the second field of 262.5 lines. This interlaced display alternates continually between odd and even fields, 60 times per second (Hz) for monochrome NTSC and 59.94Hz for color NTSC.

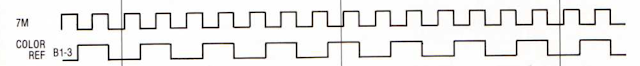

[Figure: TV Line Signal]

In color NTSC, each line begins with a "back porch", and during this back porch a color burst of approximately 8 cycles of a 3.58MHz sine wave with a phase of 180 degrees is transmitted. (Phase is the angular offset of a wave relative to a reference, measured in degrees, if you remember your basic trigonometry.) These cycles tell the TV that the line carries color information and give it a reference phase. Then, as the line travels across the screen, the sine wave shifts phase relative to the burst to define the color that the display should show at that point on the line. The phase shifts can be plotted on a color wheel according to their angle, so 180 degrees is something of a greenish-yellow, 270 is close to cyan, 360 is blue and 90 is light red. (Some diagrams, like the one above, refer to the color burst as phase 0.)
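To make the color wheel concrete, here is a minimal Python sketch that turns a luma level plus a chroma vector (a phase angle and a saturation) into an approximate RGB color. It assumes the common vector-diagram convention in which 0 degrees is the +U (B-Y) axis and 90 degrees is the +V (R-Y) axis, which puts the color burst at 180 degrees, and it uses the standard YUV-to-RGB coefficients. The function name `phase_to_rgb` is hypothetical, and real TVs also apply gamma, hue and saturation adjustments that are ignored here.

```python
import math

def phase_to_rgb(luma, saturation, phase_deg):
    """Approximate RGB (0..1) for a luma level plus a chroma vector.

    Assumed convention: 0 degrees = +U (B-Y), 90 degrees = +V (R-Y),
    which places the color burst at 180 degrees.
    """
    theta = math.radians(phase_deg)
    u = saturation * math.cos(theta)   # B-Y component
    v = saturation * math.sin(theta)   # R-Y component
    # Standard YUV -> RGB coefficients
    r = luma + 1.140 * v
    g = luma - 0.395 * u - 0.581 * v
    b = luma + 2.032 * u
    # Clamp each channel to the displayable 0..1 range
    return tuple(min(1.0, max(0.0, c)) for c in (r, g, b))

for angle in (0, 90, 180, 270):
    print(angle, [round(c, 2) for c in phase_to_rgb(0.5, 0.2, angle)])
```

Under these assumptions the four angles land on a blue-dominant, a red-dominant, a yellow-green and a green-cyan color, which lines up with the rough color names in the paragraph above.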

The color NTSC standard defines each line as having 227.5 cycles of the color subcarrier, and this includes portions of the line which are not visible and have no corresponding luma signal. You might think the resulting color would have poor resolution, but the human eye is much more sensitive to changes in brightness than to changes in color. The luma signal has greater definition and, because color is overlaid onto luma, the combined signal can give greater color detail than the limited phase shifting of the color signal can on its own. Also, a consumer-grade standard definition CRT television has a horizontal resolution of only about 250 TV lines, so a typical CRT does not resolve very fine horizontal detail anyway.
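The NTSC numbers above fit together exactly. This short worked calculation, assuming the standard definition of the color subcarrier as exactly 315/88 MHz, shows how 227.5 subcarrier cycles per line and 262.5 lines per field produce the 15.734kHz line rate and the 59.94Hz field rate of color NTSC. Exact fractions are used to avoid rounding error.

```python
from fractions import Fraction

FSC_HZ = Fraction(315_000_000, 88)      # NTSC color subcarrier, about 3.579545 MHz
CYCLES_PER_LINE = Fraction(455, 2)      # 227.5 subcarrier cycles per line
LINES_PER_FRAME = 525                   # two interlaced fields of 262.5 lines each

line_rate = FSC_HZ / CYCLES_PER_LINE                  # horizontal frequency, Hz
field_rate = line_rate / Fraction(LINES_PER_FRAME, 2)
frame_rate = line_rate / LINES_PER_FRAME

print(f"subcarrier : {float(FSC_HZ) / 1e6:.6f} MHz")
print(f"line rate  : {float(line_rate):.2f} Hz")
print(f"field rate : {float(field_rate):.3f} Hz")
print(f"frame rate : {float(frame_rate):.3f} Hz")
```

The field rate works out to exactly 60/1.001 Hz, which is where the familiar 59.94Hz figure comes from.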

[Figure: NTSC Color Wheel Phases]

When a video source, whether a TV camera, a VCR or DVD player, or a game console, manipulates the phase of the color signal directly, we call this "direct [NTSC] color." The phase shifting in this case is not only intentional but directly caused by the video source. But one property of NTSC is that when the luma signal contains high-frequency detail at or near the chroma subcarrier frequency, the NTSC decoding process can erroneously decode that luma as chroma. Moreover, repeating patterns at that frequency tend to display as solid color. This is the key to how artifact colors can be generated.
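The following sketch illustrates the idea with an idealized decoder. It assumes a repeating on/off pattern sampled at exactly four times the subcarrier frequency (about 14.318MHz), so four samples make one color cycle, and performs quadrature demodulation by correlating the pattern with a cosine and a sine at the subcarrier frequency. A pattern that repeats once per color cycle yields a strong chroma component whose phase depends on where the lit samples fall, while a flat pattern, or one that repeats twice per color cycle, yields none. The function name is hypothetical, and the phase is measured against sample 0 rather than a real color burst.

```python
import math

def artifact_chroma(pattern):
    """Return (luma, chroma amplitude, chroma phase in degrees or None).

    Assumes `pattern` is sampled at exactly 4x the subcarrier frequency, so
    every 4 samples span one color cycle. The phase is measured relative to
    sample 0, not relative to a real color burst.
    """
    n = len(pattern)
    if n == 0 or n % 4:
        raise ValueError("pattern must cover whole color cycles")
    luma = sum(pattern) / n                      # low-frequency (DC) part
    # Quadrature demodulation: correlate with cosine and sine of the subcarrier
    i = 2 / n * sum(s * math.cos(math.pi / 2 * k) for k, s in enumerate(pattern))
    q = 2 / n * sum(s * math.sin(math.pi / 2 * k) for k, s in enumerate(pattern))
    amplitude = math.hypot(i, q)
    if amplitude < 1e-9:                         # no energy at the subcarrier
        return luma, 0.0, None
    return luma, amplitude, math.degrees(math.atan2(q, i)) % 360

for p in ([1, 1, 0, 0], [0, 1, 1, 0], [0, 0, 1, 1], [1, 0, 1, 0], [1, 1, 1, 1]):
    print(p, artifact_chroma(p))
```

Shifting `[1, 1, 0, 0]` by one sample moves the decoded phase by 90 degrees, and shifting it by two samples (one Hi-Res pixel on an Apple II) moves it by 180 degrees, to the complementary color.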

Color on NTSC Apple II Systems

The Apple II series consists of six computers, all of which can display NTSC composite artifact color: Apple II, Apple II+, Apple IIe, Apple IIc, Apple IIgs and Apple IIc+. The 8-bit NTSC Apple IIs rely exclusively on artifact color; they cannot generate direct color. In other words, they have no control over the phase of the color signal. The Apple II supports three graphics modes: Graphics Mode (often called Low Resolution Graphics), High Resolution Graphics Mode and, in the later systems, Double High Resolution Graphics Mode. High Resolution Graphics Mode is the easiest to explain and the mode most often used to produce color on an Apple II, so let us start there.

[Figure: Apple II Color Reference to Pixel Clock Comparison]

High Resolution Graphics Mode displays 280 pixels x 192 lines. Each pixel can be turned on or off individually, but on its own a pixel is either white or black. Each memory byte defines seven pixels using 7 of its 8 bits. As the pixel clock is twice the NTSC color subcarrier frequency, each pair of adjacent pixels spans one color cycle, and lighting one pixel or the other in a pair selects one of two artifact colors. When the Apple II was first released, those colors were green and purple. Later revisions use the eighth bit of each byte to delay that byte's pixels by half a pixel, which shifts the pair of colors to blue and orange.
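Below is a simplified Python sketch of how one Hi-Res row could be turned into artifact colors, using the commonly documented mapping: bit 0 of each byte is the leftmost of its seven pixels; with bit 7 clear, an isolated lit pixel in an even column is purple (violet) and one in an odd column is green; with bit 7 set, the same columns give blue and orange. Two adjacent lit pixels read as white, and a single unlit pixel between two lit pixels takes on their color. The function and palette names are hypothetical, and the model ignores color fringing at edges, the half-pixel interaction between neighboring bytes with different bit 7 settings, and the earliest boards that lack the bit 7 delay.

```python
# (even column, odd column) artifact colors for each setting of bit 7
PALETTE = {0: ("violet", "green"), 1: ("blue", "orange")}

def hires_row_colors(row_bytes):
    """Return a simplified color name for each of the 7 pixels per byte."""
    bits, high = [], []
    for b in row_bytes:
        for i in range(7):                 # bit 0 is displayed leftmost
            bits.append((b >> i) & 1)
            high.append((b >> 7) & 1)      # bit 7 applies to all 7 pixels of the byte
    colors = []
    for x, on in enumerate(bits):
        left = bits[x - 1] if x > 0 else 0
        right = bits[x + 1] if x + 1 < len(bits) else 0
        if on and (left or right):
            colors.append("white")         # adjacent lit pixels read as white
        elif on:
            colors.append(PALETTE[high[x]][x % 2])
        elif left and right:
            # an unlit gap between two same-parity lit pixels takes their color
            colors.append(PALETTE[high[x]][(x - 1) % 2])
        else:
            colors.append("black")
    return colors

print(hires_row_colors([0x55, 0x2A]))      # alternating pixels across two bytes
```

The example bytes light every even column with bit 7 clear, so the model fills the row with violet until the pattern runs out at the right edge.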

[Figure: Two Hi-Res Pixels and the Color Cycle, half-pixel periods shown]

[Figure: Graphics Mode Monochrome]

Double High Resolution Graphics Mode displays 560x192 pixels. In this mode, every group of four contiguous horizontal monochrome pixels spans one color cycle, and the on/off pattern of those four pixels selects one of the 16 colors that the Apple II can display in the lower resolution Graphics Mode. Essentially, Double High Resolution Graphics is what you would get if you had finer control of the pixels in Graphics Mode, but it requires significantly more memory than Graphics Mode, more than was affordable in 1977. Double High Resolution Graphics requires either an Apple IIe with an Extended 80-column Text Card installed and a revision B or later motherboard, or a IIc, IIc+ or IIgs. The Extended 80-column Text Card also unofficially permits a Double Low Resolution Graphics Mode with an 80x48 resolution.
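Because four Double Hi-Res pixels span one color cycle, there are only 2^4 = 16 possible patterns per cycle. This sketch, assuming an idealized decoder (pixel clock exactly four times the subcarrier frequency, phase measured from the first pixel of the group), computes the luma level and chroma phase of every pattern. It finds 15 distinct results: `0101` and `1010` both decode as a 50% gray with no chroma, which is consistent with the two identical grays (colors 5 and 10) in the Apple II's 16-color Graphics Mode palette. The function name is hypothetical, and the mapping from patterns to named colors is not shown.

```python
import math
from itertools import product

def pattern_color(bits):
    """Return (luma, chroma amplitude, phase in degrees or None) for 4 pixels.

    Assumes the 4 pixels span exactly one subcarrier cycle (pixel clock =
    4x subcarrier) and measures phase from the first pixel of the group.
    """
    luma = sum(bits) / 4
    i = sum(b * math.cos(math.pi / 2 * k) for k, b in enumerate(bits)) / 2
    q = sum(b * math.sin(math.pi / 2 * k) for k, b in enumerate(bits)) / 2
    amplitude = math.hypot(i, q)
    if amplitude < 1e-9:                       # no chroma: black, gray or white
        return luma, 0.0, None
    return luma, round(amplitude, 3), round(math.degrees(math.atan2(q, i))) % 360

colors = {bits: pattern_color(bits) for bits in product((0, 1), repeat=4)}
for bits, color in sorted(colors.items()):
    print("".join(map(str, bits)), color)
print(len(set(colors.values())), "distinct results from", len(colors), "patterns")
```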

Background and border colors on an Apple II are always black with the exception of an Apple IIgs simulating the 8-bit graphics modes. The Apple IIgs shows a black background in graphics modes but can show a blue border. Text modes are white on blue on the IIgs instead of white on black.

The color burst signal is disabled in text modes (40-column or 80-column by 24 rows) for all but the first revision of the original Apple II, so text in these modes will appear in monochrome without color fringing. However, text characters displayed in Mixed Text/Graphics Modes, either with High Resolution Graphics or Low Resolution Graphics (taking up the bottom 32 lines/4 rows), will show color fringes on the text on a color monitor or TV. Any screenshot showing no color fringing on the text in these modes is an emulator feature and not authentic to original hardware (at least not on a composite monitor).

The genius of this artifact color system is that it provides "free color" on the standard displays of the day. Instead of implementing palette registers and lookup tables for color values, or delay lines that chop colors up into discrete phases, the Apple II relies solely on the color burst and the data values underlying the pixels to produce color, and makes the TV or monitor do the rest. Colors are stable (by NTSC standards) and can be placed almost anywhere on the screen. In other words, a program can draw an orange line diagonally across the screen, and the programmer can be assured that the line will appear as some form of orange on any color monitor or TV set.

Color on Apple II PAL Systems

PAL color was developed around a decade after NTSC color and has certain advantages over NTSC. The most important is that the phase of one color component (V) is reversed on every other line, and comparing the phases of two consecutive lines tends to eliminate color errors from phase misalignment except in extreme cases. Thus high-frequency luma is not misinterpreted as chroma in the same way (and PAL has a higher luma bandwidth of around 5MHz, compared to 4.2MHz for NTSC). For this reason, artifact color cannot be generated on a color PAL display as it can on a color NTSC display. Artifact NTSC colors must be converted into direct PAL colors.
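Here is a minimal sketch of why PAL's line-by-line alternation cancels phase errors. It assumes an idealized decoder that averages the chroma of two consecutive lines, as a PAL delay-line decoder does, and a phase error that is identical on both lines. The chroma is modeled as a complex number U + jV; the transmitter inverts V on alternate lines and the decoder re-inverts it, so the same error rotates one line's hue forward and the other line's hue backward. Averaging restores the intended hue and only reduces saturation by the cosine of the error. The function name is hypothetical.

```python
import cmath
import math

def decode_pal_pair(hue_deg, saturation, error_deg):
    """Average two PAL lines that suffer the same phase error.

    Chroma is modeled as a complex number U + jV. PAL sends V inverted on
    alternate lines; the decoder re-inverts it (complex conjugate), so the
    same error shifts the two lines' decoded hues in opposite directions.
    """
    wanted = cmath.rect(saturation, math.radians(hue_deg))
    error = cmath.rect(1.0, math.radians(error_deg))   # phase error as a rotation
    line_a = wanted * error                             # normal line
    line_b = (wanted.conjugate() * error).conjugate()   # V-inverted line, re-inverted
    averaged = (line_a + line_b) / 2
    return math.degrees(cmath.phase(averaged)) % 360, abs(averaged)

hue, sat = decode_pal_pair(hue_deg=60, saturation=1.0, error_deg=20)
print(f"decoded hue {hue:.1f} degrees, saturation {sat:.3f}")
```

With a 20 degree error, an NTSC set would show the hue 20 degrees off, while this PAL model keeps the hue at 60 degrees and loses about 6% of the saturation.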

While there were other computer systems that supported artifact color and were released in PAL countries or had very similar PAL counterparts (the Tandy Color Computer and Dragon 32/64 lines, the Atari 8-bit line, the Atari 7800, IBM CGA clones), none of them bothered to translate NTSC artifact color into PAL direct color. These PAL machines show monochrome graphics when their high resolution modes are used to display NTSC artifact color.

There were PAL Apple IIs starting with the Europlus (derived from the II+) and continuing with PAL and "NTSC International" versions of the IIe. Apple had supported PAL frequencies since the Rev. 1 motherboards, which appeared rather early in the Apple II's lifecycle. The Europlus did not output color on its own; it was strictly monochrome 50Hz out of the box. The Europlus could be fitted with the PAL Color Encoder Card in Slot 7, which translated the pixel patterns of the Apple II graphics modes into the colors an NTSC display would have shown, encoded for a PAL display. The PAL Color Encoder Card would tap into the video signal, convert every pixel into a 4-bit value, assign the correct color and generate a PAL-compatible 4.43MHz color signal running at 50Hz, with colors assigned according to the pixel patterns. The resulting display may not have been perfect, but it worked.

Color on the Apple IIe was a little weird. The Apple IIe PAL motherboard integrated the functions of the PAL Color Encoder Card, so you could get PAL color with a normal PAL color TV or monitor. In fact, it may even have produced a better signal than the Europlus and its PAL Color Encoder Card.

The "International NTSC IIe" motherboard could output color, but it used a color clock frequency of 3.56MHz and a refresh rate of 50Hz. It was released in the Platinum IIe style with the numeric keypad in Australia and New Zealand, and without the keypad in Europe. Only Apple's own monitors supported a 3.56MHz color signal at 50Hz. Regular color NTSC monitors did not like the 50Hz refresh rate and may not have recognized the slightly-off color frequency as a valid color signal. PAL monitors would not recognize the 3.56MHz color signal at all, as it is far from the standard 4.43MHz.

On both the PAL and International NTSC IIe motherboards, the Auxiliary Slot was moved from its position on the regular NTSC boards to line up with Slot 3. The Extended 80-column memory cards for the PAL and International IIe motherboards plug into both the Auxiliary Slot and Slot 3, as they require a single signal from Slot 3.

[Figure: Apple IIe NTSC International Motherboard]

A PAL variant of the //c was also released. Color could be added to the PAL //c by plugging the PAL Modulator/Adapter into the port intended for the LCD, which carried the video-related signals from which the video could be reconstructed. Like the Apple IIe NTSC International motherboard, it also supported Apple's color monitors out of the box.

Perhaps the best explanation for the existence of the NTSC International machines is that the method of conversion to PAL invariably added dot crawl artifacts. The NTSC International systems, when paired with the 220/240V Apple Color Monitor IIe, do not exhibit dot crawl.

Apple IIgs systems sold outside NTSC countries had a setting to switch between 50Hz and 60Hz but, with a few exceptions, their composite video output was NTSC color only. There may have been some systems sold to schools in Australia which had a daughterboard mounted on the video chip to permit PAL color output.

PAL Apple IIs are not clocked significantly lower than NTSC Apple IIs; all Apple IIs run the CPU at about 1.02MHz. This means that programs do not run slower due to a slower clock speed, as a PAL C64 does compared to an NTSC C64 (0.985MHz vs. 1.023MHz). Nor do music and sound effects play at a slower tempo or lower pitch, because the Apple II has no hardware timers; all music is timed by counting CPU cycles. Games usually do not appear to run slower either. The video hardware of the Apple II has no vertical blanking interrupt, or even a way to poll for vertical blank until the IIc, so it was up to the programmer to keep the graphics in sync with the display. As very few Apple II games update the display anywhere near 60 times per second, their graphics should fit comfortably within 50 frames per second, though tearing may be present on moving objects.
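This worked calculation shows where the NTSC Apple II's 1.02MHz figure comes from and why cycle-counted sound depends only on the CPU clock. It assumes the NTSC Apple II's documented video timing: a 14.31818MHz master clock (four times the color subcarrier), 65 CPU cycles per line in which 64 cycles last 14 master clock ticks and one "long" cycle lasts 16, and 262 lines per frame. The `speaker_tone_hz` helper is hypothetical; it models a loop that toggles the speaker (by accessing $C030) every N cycles, so the pitch is the CPU clock divided by 2N regardless of the video refresh rate.

```python
from fractions import Fraction

MASTER_HZ = Fraction(315_000_000, 22)   # 14.31818 MHz crystal, 4x the NTSC subcarrier
TICKS_PER_LINE = 64 * 14 + 16           # 65 CPU cycles; one "long" cycle is 16 ticks
CYCLES_PER_LINE = 65
LINES_PER_FRAME = 262                   # NTSC Apple II, non-interlaced

cpu_hz = MASTER_HZ * CYCLES_PER_LINE / TICKS_PER_LINE
frame_hz = MASTER_HZ / (TICKS_PER_LINE * LINES_PER_FRAME)
cycles_per_frame = CYCLES_PER_LINE * LINES_PER_FRAME

def speaker_tone_hz(cycles_between_toggles, clock_hz=cpu_hz):
    """Pitch of a square wave made by toggling the speaker every N CPU cycles."""
    return clock_hz / (2 * cycles_between_toggles)

print(f"CPU clock  : {float(cpu_hz):,.0f} Hz")
print(f"frame rate : {float(frame_hz):.2f} Hz, {cycles_per_frame} cycles per frame")
print(f"tone       : {float(speaker_tone_hz(1160)):.1f} Hz")
```

A loop that toggles the speaker every 1160 cycles gives a tone of about 440Hz, and the same loop would produce essentially the same pitch on any Apple II with the same CPU clock, whatever its refresh rate.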

Filtering and Artifact Color

Inside every TV is a demodulating circuit that separates the luma signal from the chroma signal. However, any signal separation is imperfect and will lead to issues. S-Video's chief innovation over composite video was to keep the luma and chroma signals separate at all times so they could not interfere with each other. S-Video eliminates artifact color because the mixing of chroma and luma, and the resulting quirks of separating them, are no longer present. Various filtering methods are used with composite video to try to approach the result of S-Video, but they cannot achieve perfect separation.

The most common method used to separate the signals during the Apple II's lifetime (1977-1993) was a notch filter combined with a bandpass filter. The bandpass filter looks only at the area around 3.58MHz to capture the chroma signal, while the notch filter, a kind of band-stop filter, passes everything in the 0-4.2MHz luma band except the area around 3.58MHz, preserving more luma information than a crude low-pass/high-pass method.
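As a rough illustration of the notch/bandpass idea, here is a crude digital version; it is not the analog filter circuitry that real monitors used. It assumes the composite signal is sampled at four times the subcarrier frequency, so samples two apart are half a color cycle apart: averaging them notches out 3.58MHz while passing DC, and halving their difference passes only the band around 3.58MHz. The function name is hypothetical, and this filter pair is far less selective than a real one (for example, its "notch" output also passes energy at 7.16MHz).

```python
def split_luma_chroma(samples):
    """Crude notch/bandpass split of a signal sampled at 4x the subcarrier.

    samples[n] and samples[n-2] are half a color cycle apart, so their
    average cancels the subcarrier (notch) and half their difference keeps
    only the subcarrier band (bandpass). luma + chroma always equals the input.
    """
    luma, chroma = [], []
    for n, s in enumerate(samples):
        prev = samples[n - 2] if n >= 2 else s   # crude edge handling: reuse sample
        luma.append((s + prev) / 2)
        chroma.append((s - prev) / 2)
    return luma, chroma

pattern = [1, 1, 0, 0] * 4   # alternating lit/unlit Hi-Res pixels, 2 samples each
luma, chroma = split_luma_chroma(pattern)
print(luma[2:])
print(chroma[2:])
```

Fed this alternating pixel pattern, the luma output is a flat 50% level and the chroma output is a full-strength subcarrier, which a notch-filtered display shows as a solid artifact color.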

The notch filter was about as sophisticated a filtering method as was found in most composite color computer monitors and TV sets up to the late 1980s. The Apple ColorMonitor IIe (a.k.a. the AppleColor Composite Monitor IIe), the AppleColor Composite Monitor IIc and the Commodore 1084S all use notch filters.

The next innovation in luma/chroma composite signal separation was the delay line. With the two-line delay system, the monitor stores one line of the signal and combines it with the next line as that line arrives. Because two vertically adjacent lines tend not to have wildly different colors, and the color carriers of consecutive lines are inverted in phase, combining the two lines cancels most of the chroma, leaving much cleaner luma than a notch filter alone.
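The delay line idea can be sketched in a few lines, assuming an idealized standard NTSC signal in which the subcarrier on one line is exactly inverted relative to the same position on the previous line, and the picture content of the two lines is identical. Adding the lines cancels the chroma and leaves luma; subtracting them cancels the luma and leaves chroma. The function name and sample values are hypothetical.

```python
def comb_two_lines(current, previous):
    """Idealized two-line comb filter over samples at matching positions."""
    luma = [(a + b) / 2 for a, b in zip(current, previous)]    # chroma cancels
    chroma = [(a - b) / 2 for a, b in zip(current, previous)]  # luma cancels
    return luma, chroma

y = [0.5, 0.5, 0.5, 0.5]                     # flat 50% luma
c = [0.2, 0.0, -0.2, 0.0]                    # one cycle of subcarrier, 4 samples
line_n = [a + b for a, b in zip(y, c)]
line_prev = [a - b for a, b in zip(y, c)]    # standard NTSC: subcarrier inverted

print(comb_two_lines(line_n, line_prev))     # recovers y and c, up to float rounding
print(comb_two_lines(line_n, line_n))        # subcarrier NOT inverted between lines
```

The last line shows what this idealized filter does when the subcarrier is not inverted between lines: the chroma cancels out and the subcarrier pattern ends up in the luma output.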

The two-line delay system was an improvement, but it was not perfect, and some systems improved on it a little by using a three-line delay system. High-frequency horizontal color detail can bleed into the luma channel, leading to "dot crawl". Additionally, sharp changes in luma can lead to rainbow artifacts and color bleed. To reduce these unsightly artifacts, a more sophisticated two-dimensional (2D) three-line adaptive system was invented, which varies the color comparisons over three lines as they are being drawn to best reduce dot crawl. Finally, three-dimensional (3D) motion adaptive comb filters compare the lines of the previous frame with those of the current frame to virtually eliminate dot crawl.

Comb filters work by assuming the signal follows the NTSC rules. Of course, the Apple II signal does not follow the NTSC rules in several respects. First, the Apple II video signal is progressive rather than interlaced. Second, the Apple II does not invert the subcarrier phase on every other line: each Apple II line is exactly 228 color cycles long rather than 227.5, so the phase starts at the same point on every line. This tends to make comb filters behave a little strangely on the Apple II. Because comb filters combine the color signals of pairs of lines, they tend to cause a loss of vertical detail. On the Apple II, this can blend alternating single-color lines together.
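The phase difference can be checked with a little arithmetic. In standard NTSC, 227.5 subcarrier cycles per line leave half a cycle over, so each line starts 180 degrees away from the previous one. On an NTSC Apple II, a line lasts 65 CPU cycles, one of them stretched, for 912 ticks of the 14.31818MHz master clock, which is exactly 228 subcarrier cycles, so every line starts at the same phase. The helper below is hypothetical and simply computes the starting subcarrier phase of each line.

```python
from fractions import Fraction

def burst_phase_by_line(cycles_per_line, lines=4):
    """Subcarrier phase (degrees) at the start of each line, relative to line 0."""
    return [float((Fraction(cycles_per_line) * n % 1) * 360) for n in range(lines)]

print("NTSC standard (227.5):", burst_phase_by_line(Fraction(455, 2)))
print("Apple II      (228)  :", burst_phase_by_line(228))
```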

[Figure: Karateka with Notch Filter]

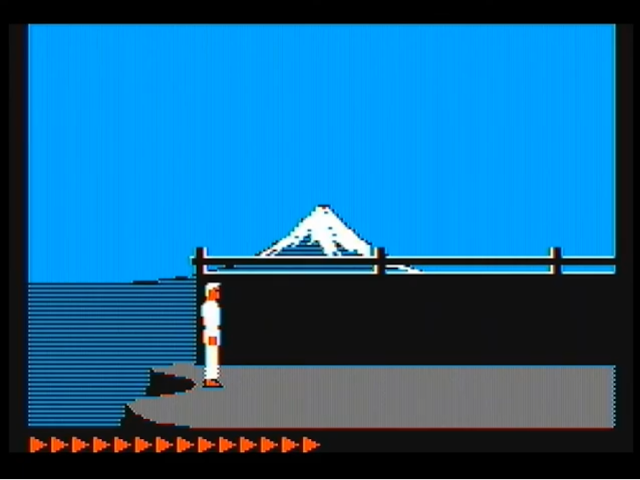

Consider this screenshot of Karateka. The ground is drawn, in canonical Apple II colors, as alternating blue and orange lines. The black and white image (of the kind commonly seen on the green screens of the day) shows a checkerboard pattern.

[Figure: Karateka in Monochrome (raw pixel)]

Now consider the pattern through a typical two-line comb filter:

[Figure: Karateka with Delay Line Comb Filter]

As you can see, the lines have been blended together into a gray that the eye would not ordinarily perceive from this pattern. Perhaps the crappiest vintage color TVs may blend these lines together into a solid gray, but such a TV would make for a crappy computer monitor. The blue and orange lines are vertically adjacent and their pixels are offset horizontally by one pixel, so when you combine them you could get a white signal, but because luma is off for over 50% of the line, you get gray instead. The same thing would happen with alternating green and purple lines. However, you can get different colors by mixing colors which are not 180 degrees out of phase with each other.

Finally, let's consider how a 3D motion adaptive comb filter would handle this image:

[Figure: Karateka (Side B) with 3D Motion Adaptive Comb Filter]

As you might note, the sophisticated comb filter has done its job too well here, eliminating virtually all the color, which it sees in this instance as a rainbow artifact to be canceled out. In fact, we have come full circle to reproduce, as well as a capture card can, the original dots making up the video signal! The unevenly sized pixels and the resulting scaling artifacts are a symptom of composite/S-Video capture cards, which only sample composite video at 13.5MHz. As the pixel clock is about 7.16MHz, you would need a sample rate of 14.318MHz to fully capture all the pixels without scaling artifacts.
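To see why 13.5MHz sampling produces unevenly sized pixels, this sketch counts how many capture samples fall within each of the 280 Hi-Res pixels. It assumes the Hi-Res pixel clock is exactly twice the NTSC subcarrier (about 7.159MHz) and that the first sample lines up with the left edge of the first pixel; the function name is hypothetical. At 13.5MHz there are about 1.886 samples per pixel, so most pixels get two samples and some get only one, while at 14.318MHz every pixel gets exactly two.

```python
import math
from collections import Counter
from fractions import Fraction

FSC = Fraction(315_000_000, 88)   # NTSC color subcarrier, Hz
DOT_CLOCK = 2 * FSC               # Apple II Hi-Res pixel clock, about 7.159 MHz

def samples_per_pixel(sample_rate, pixels=280):
    """Count how many capture samples land inside each Hi-Res pixel."""
    ratio = Fraction(sample_rate) / DOT_CLOCK   # samples per pixel (may be fractional)
    # Sample k falls in pixel i when i*ratio <= k < (i+1)*ratio
    return Counter(math.ceil((i + 1) * ratio) - math.ceil(i * ratio) for i in range(pixels))

print("13.5 MHz  :", samples_per_pixel(13_500_000))
print("14.318 MHz:", samples_per_pixel(4 * FSC))
```

With these assumptions, 248 of the 280 pixels receive two samples and 32 receive one, the kind of uneven pixel width visible in the captures.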

If you look at the back of the box in which Karateka was sold, you should see a gray color on the ground, suggesting that comb filtering was not unknown in the 1980s. But this is not the only example of probable reliance on comb filtering. Consider the title screen of The Bard's Tale:

[Figure: The Bard's Tale - Notch Filter]

[Figure: The Bard's Tale - Delay Line Comb Filter]

With the delay line comb filter, the Bard's hat becomes a solid pink, and the listener's hat has a green color that differs from the normal green (see the Bard's pants) and a purplish-blue ribbon that is neither the normal purple nor the normal blue hue. If you used a display with a motion adaptive 3D comb filter, you would see dots instead of lines or solid color, something that is definitely not ideal.

A Quick Note about Tandy Color Computer Artifact Color

The NTSC Tandy Color Computer 1 and 2 produce artifact color almost identically to the High Resolution Graphics Mode of the Apple IIs. The differences are as follows. There are only 256 horizontal pixels displayed on the CoCos vs. 280 pixels on the Apple IIs (both with 192 lines). Only the blue/orange artifact color combination is available for white pixels; there is no bit that induces a half-pixel phase delay to change blue/orange pixels into purple/green pixels. The CoCo permits green pixels to be used instead of white pixels, which generate greenish-brown artifact colors, but that is the limit of the CoCo's capability to generate direct color in a mode which can produce artifact color. Finally, the phase offset between luma and chroma is in one of two random positions when the CoCo is booted, so the first color pixel on a line could be blue or orange. If the colors at boot were incorrect, programs would tell the user to press the reset button, which would cycle to the next phase offset and swap the blue and orange colors. The CoCos had many low resolution modes which used direct color and had more colors available, but artifact color did see use in over 100 games. The CoCo 3 is backwards compatible with the CoCo 1 & 2 modes, has its own higher resolution, more colorful graphics modes, and supports RGB video as well as composite video. One game (Where in the World is Carmen Sandiego?) may use artifact color with those more advanced graphics modes.

Comments

Very detailed post, thank you! At last I understand why some emulators have to add a "blurred lines" chroma feature, that's to simulate comb filters. I also need to find a way to test 50Hz NTSC on my PAL IIc. I don't think any of my composite converters would understand it.

A few things I noted:

- In mixed mode (graphics + 4 lines of text), the chroma burst is actually disabled for text lines, but NTSC monitors don't stop decoding colors anyway. It seems that's in the NTSC standard so that interference in TV broadcasts doesn't result in mixed B&W and color lines (in the case of a corrupted/missed chroma burst). The result is that while the Apple II actually disables color for text lines, the TV or monitor still displays them in color, resulting in color fringes. This is not the case for PAL Apple IIs, because the PAL modulator immediately detects the lack of chroma burst and disables color for text lines in mixed mode.

The generation of PAL video in later Apple IIs is explained in "Understanding the Apple IIe" by Jim Sather.

- A PAL Apple II runs at 1.016MHz to fit the needed frequency for PAL generation, and so is slower than an NTSC Apple II, which is clocked at 1.0205MHz.

- VSYNC polling/interrupt was introduced with the IIc, but it is possible to poll the start and end of VBLANK for sync on the IIe (Prince of Persia does it), which is useful for double buffering. It was also possible to unofficially sync with the screen on the earlier Apple II/II+ using the "shadow bus". I heard some games used it, and the recent "Apple II Cycle-Counting Megademo" by VMW Productions also syncs with the display this way.

- The Apple IIs don't just stop displaying out of the graphics/text window, they actually trigger HBLANK and VBLANK, so nothing could be displayed anyway. This was changed with the IIgs of course.

Just a small correction. The NTSC color burst signal is not gated by the presence of text. Text is generated by TEXT + MIX * V4 * V2 (ie. TEXT being set, or MIX being set and the beam being in the lower 1/8th). But the color burst is gated only by TEXT' * HBL * H3 * H2 (TEXT being clear and the beam being in the correct horizontal position). Any time there are graphics on the screen, the color burst is present on every scanline.

By carefully manipulating the TEXT softswitch during HBLANK you can force the color burst on or off (eg. for a color text screen or mono graphics screen). Enabling and disabling the color burst multiple times during a single frame leads to unpredictable results, depending on the monitor.

It's not totally clear if the PAL IOU uses the same CLRGATE logic as the NTSC60 IOU but I suspect it does.

Thank-you for the discussion of artifact colours on the Coco -- you anticipated my question/comment. :-)

As you're probably aware, it is possible to use "vapor lock" techniques to figure out the vertical blank on the Apple II+/IIe, so it's possible to do various cycle-counted race-the-beam type effects that work fine on NTSC Apples but break horribly. For example: https://www.youtube.com/watch?v=McKAcKVTIQs

This is an incredibly informative page, thanks! It turns out the Apple II Europlus (or Euromod) outputs the same 50Hz color NTSC (3.56Mhz) as 'PAL' Apple IIc and International NTSC Apple IIe. And pairs up fine with 220/240V Apple-brand color composite monitors. I found a video which shows this:- https://www.facebook.com/groups/5251478676/posts/10161279612868677

And a screenshot:- http://www.cvxmelody.net/Super_Bunny_Apple_II_Europlus_direct_to_240V_AppleColor_Composite_Monitor_IIe_A2M6021X.jpg

Are you aware the Karateka Digital Comb Filter.png image displays upside down? Is that by mistake?

It is not a mistake. It displays upside down relative to the normal way the game is played, but if you look closely, the elements are not mirrored as they should be if I had flipped the image 180 degrees...

There is one machine - ostensibly Apple-compatible (indeed, it was the first licensed Apple-compatible!) - that attempts to do artefact colour directly on the PAL; the ITT 2020. It's not completely compatible, because the increased colourburst frequency requires the use of 360, rather than 280, dots across the screen, so all 8 bits of the graphics word are used (and another one has to be found from somewhere) and there's no half-clock shift bit; and because of the PAL system, colours have to be derived in a 2x2 block of pixels. But the upside is that high resolution mode can produce all 16 colours at once, rather than being limited to just 6.

Sadly the ITT 2020 achieved very little success. Perhaps even then people were disenchanted with "sort of" compatibility?

(It's described here: https://archive.org/details/PersonalComputerWorld1980-11/page/64/mode/2up)

Hello, I have a question: on the image showing the low resolution color palette, one can see "vertical lines" inside the color bars. After hours of writing a digital NTSC decoder, I still can't explain where they come from. Do you know why these vertical lines are there? (On my real A2e, these lines are not there either, so I guess the monitor does something, but I can't find what.) My best guess is that the luma channel interferes with the chroma one. For example, if I low-pass filter the composite signal down to 3.58MHz, the lines disappear. But since 3.58MHz is very low compared to what NTSC allows, I find it rather strange to do that...
